Artificial Neural Network

Published by robert_super

Feed Forward Artificial Neural Network

https://github.com/GandhiGames/ANN

I wrote this article a little while back as background information for a now complete (and, as we are dealing with software, most likely out of date) project. However, I will be starting a new ‘AI experiments tutorial series’ soon(ish) that will use neural networks. Once it has started, it would be nice to have access to this information, which may not fit into the tutorial write-ups because it is a bit too sciencey (totally not a word, but you know what I mean).

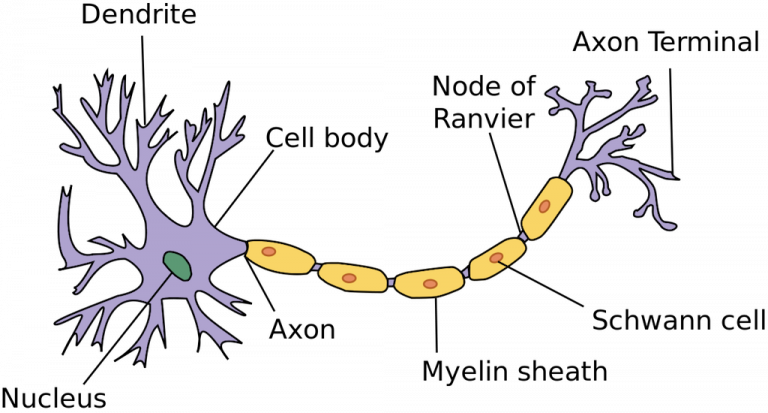

The basic cell in a biological brain is called a neuron (a type of nerve cell). A typical human brain contains roughly 86 billion of them, a figure often rounded up to 100 billion. These neurons are connected to form a biological neural network, with each neuron having on average thousands of connections to other neurons (10,000 is a commonly quoted figure).

Different animals have different numbers of neurons; for example, a mouse has around 75 million neurons and a chimpanzee around 7 billion.

The neurons differ based on their size, which neurons they are connected to, and the strengths of their connections with those neurons. The neuron has a relatively simple structure.

The dendrites stimulate the neuron with signals from other neurons. The signals are then transmitted down the axon, which connects to the dendrites of other cells. The cell body has a voltage called an electrical potential, which changes when signals are received in the form of electro-chemical pulses. These signals can be excitatory, adding to the electrical potential, or inhibitory, subtracting from it.

If the potential exceeds a threshold, the neuron fires and sends an electro-chemical pulse along the axon and, in turn, to other neurons. The neuron then returns to a resting state and waits for its electrical potential to build again. If the threshold is not reached, the neuron does not fire.
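The accumulate, fire and reset behaviour described above can be sketched in a few lines of Python. This is a toy model only: the class name `ToyNeuron`, the threshold of 1.0 and the signal values are all made up for illustration, and real neurons are far more complicated than this.

```python
class ToyNeuron:
    """Toy accumulate-and-fire model (hypothetical, for illustration only)."""

    def __init__(self, threshold=1.0, resting_potential=0.0):
        self.threshold = threshold
        self.resting_potential = resting_potential
        self.potential = resting_potential

    def receive(self, signal):
        """Apply one incoming signal and report whether the neuron fired.

        A positive signal is excitatory (adds to the potential),
        a negative signal is inhibitory (subtracts from it).
        """
        self.potential += signal
        if self.potential > self.threshold:
            # Fire, then return to the resting state.
            self.potential = self.resting_potential
            return True
        return False


neuron = ToyNeuron(threshold=1.0)
# Three signals build the potential up, the fourth pushes it over the threshold.
fired = [neuron.receive(signal) for signal in (0.6, -0.3, 0.5, 0.4)]
print(fired)  # [False, False, False, True]
```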

Through the process of learning, the connections between neurons are strengthened or weakened. Strengthening a pathway between neurons increases the chance that the same pathway fires the next time the same event occurs. Conversely, the chance decreases for less used pathways.

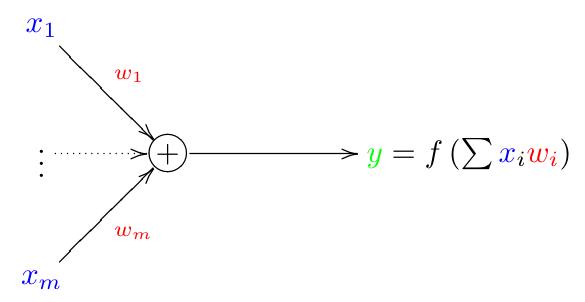

An artificial neural network is composed of a number of building blocks called artificial neurons. These are based on the biological neuron described above. A model of an artificial neuron is shown below.

X1, X2, X3, …, Xm represent the input values. Each input has an associated weight w1, w2, w3, …, wm. These weights encode the knowledge of a network: copy a set of weights from one neural network to another with the same structure, and the second network will perform the same task in the same manner.

The input into each neuron is multiplied by its associated weight and the results are summed. For example, if a neuron has three inputs 1, 3, 2 with associated weights -1, 1, 4, then x = (1*-1)+(3*1)+(2*4) = 10. If x is above a threshold, the neuron fires and outputs a number.
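To make the arithmetic concrete, here is a minimal Python sketch of a single artificial neuron. It is not code from the project: the function names `weighted_sum` and `step_neuron`, the threshold of 5 and the choice to output 1 or 0 are assumptions made for the example.

```python
def weighted_sum(inputs, weights):
    """Multiply each input by its associated weight and add up the results."""
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one weight")
    return sum(x * w for x, w in zip(inputs, weights))


def step_neuron(inputs, weights, threshold):
    """Fire (output 1) if the weighted sum is above the threshold, else output 0."""
    return 1 if weighted_sum(inputs, weights) > threshold else 0


# Inputs 1, 3, 2 with weights -1, 1, 4: (1 * -1) + (3 * 1) + (2 * 4) = 10
x = weighted_sum([1, 3, 2], [-1, 1, 4])
print(x)  # 10

# With an assumed threshold of 5 the neuron fires.
print(step_neuron([1, 3, 2], [-1, 1, 4], threshold=5))  # 1
```

Outputting a fixed 1 or 0 is the simplest possible choice; many networks pass the weighted sum through a smooth function instead.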

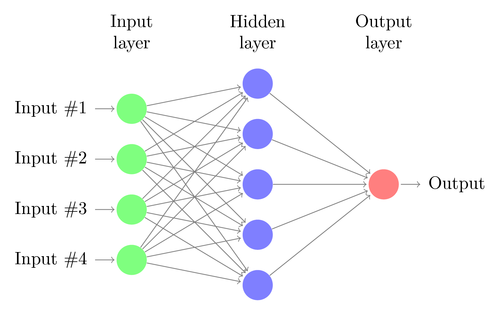

The neurons are organised in a number of layers. A possible organisation is shown below.

The number of neurons in each layer and the number of hidden layers are variable. A neuron connects to every neuron in the previous layer, and each connection has a weight.

The input layer is associated with the input variables. The neurons in this layer do not perform any calculation; they simply pass the data on to the next layer (usually a hidden layer). A hidden layer is so called because it is hidden from the environment: it accepts no direct input and provides no direct output. There can be any number of hidden layers, and how many you need depends on the application. The output layer, finally, presents the network's result to the environment.
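The layered structure can be sketched as a short feed-forward pass in Python. Treat this as an illustration under assumptions rather than the project's implementation: the helper names, the 3-4-2 layer sizes, the random starting weights and the sigmoid activation (used here in place of a hard threshold) are all choices made for the example, and bias terms are left out to keep it short.

```python
import copy
import math
import random


def sigmoid(x):
    """Smooth activation that squashes any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


def make_network(layer_sizes, rng):
    """Build one weight list per neuron, one weight per incoming connection.

    layer_sizes such as [3, 4, 2] means 3 inputs, one hidden layer of
    4 neurons and an output layer of 2 neurons.
    """
    return [
        [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    ]


def feed_forward(network, inputs):
    """Pass the inputs through every layer in turn and return the outputs."""
    activations = list(inputs)  # the input layer passes data on unchanged
    for layer in network:
        activations = [
            sigmoid(sum(w * a for w, a in zip(neuron_weights, activations)))
            for neuron_weights in layer
        ]
    return activations


rng = random.Random(0)  # fixed seed so the run is repeatable
network = make_network([3, 4, 2], rng)
outputs = feed_forward(network, [1.0, 3.0, 2.0])
print(outputs)

# A second network given the same weights produces the same outputs.
clone = copy.deepcopy(network)
print(feed_forward(clone, [1.0, 3.0, 2.0]) == outputs)  # True
```

The last two lines show the point made earlier about weights: all of the network's knowledge sits in them, so copying them into a network of the same shape copies the behaviour.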

When should you use it?

I haven’t often found a use for an ANN in a game environment other than applying novel learning to enemy AI. For example, I created a top-down shooter where the enemies learn over time, using an ANN and a genetic algorithm, to avoid the player and to come up with different ways of staying alive longer. That project can be found here.
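For readers who have not met the combination before, here is a rough Python sketch of how a genetic algorithm can search for a good set of network weights. It is not the code from the shooter project. Everything in it is an assumption made for illustration: the function names, the mutation and crossover scheme, the population size and especially the stand-in `fitness` function, which in a game would be something like how long an enemy survived.

```python
import random


def mutate(weights, rng, rate=0.1, scale=0.5):
    """Randomly nudge some of the weights."""
    return [w + rng.gauss(0.0, scale) if rng.random() < rate else w for w in weights]


def crossover(parent_a, parent_b, rng):
    """Take the first part of one parent and the rest of the other."""
    point = rng.randrange(1, len(parent_a))
    return parent_a[:point] + parent_b[point:]


def evolve(population, fitness, rng, n_keep=2):
    """Keep the fittest weight sets and refill the population with their children."""
    ranked = sorted(population, key=fitness, reverse=True)
    parents = ranked[:n_keep]
    children = []
    while len(parents) + len(children) < len(population):
        a, b = rng.sample(parents, 2)
        children.append(mutate(crossover(a, b, rng), rng))
    return parents + children


rng = random.Random(1)
target = [0.5, -0.2, 0.8, 0.1]  # made-up "ideal" weights for the stand-in fitness


def fitness(weights):
    """Stand-in score: higher is better, best possible is 0."""
    return -sum((w - t) ** 2 for w, t in zip(weights, target))


population = [[rng.uniform(-1.0, 1.0) for _ in range(4)] for _ in range(10)]
initial_best = max(fitness(w) for w in population)
for _ in range(50):
    population = evolve(population, fitness, rng)
final_best = max(fitness(w) for w in population)
print(initial_best, final_best)
```

Because the best weight sets are carried over unchanged each generation, the best score can never get worse from one generation to the next.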

Stanford has information on a number of common neural network applications here. ANNs are also commonly used in artificial life simulations because they attempt to mimic, at an abstract and simplified level, the way a biological brain works.

Each ANN application will require its own setup, so unfortunately I cannot provide any detailed instructions. The project does come with an example scene that is very basic but should give you some idea of how to begin setting up the neural network.